
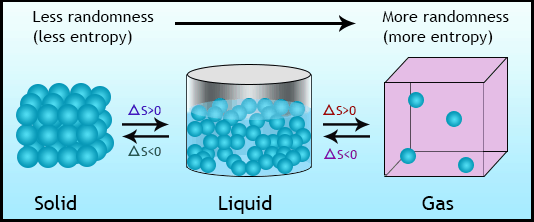

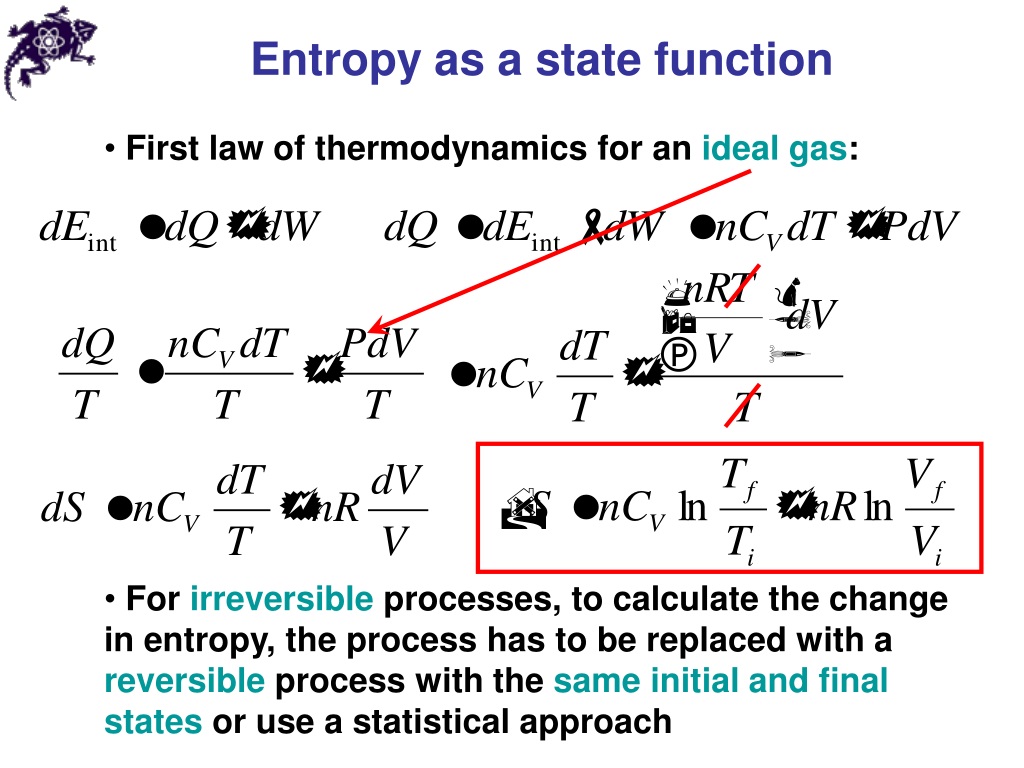

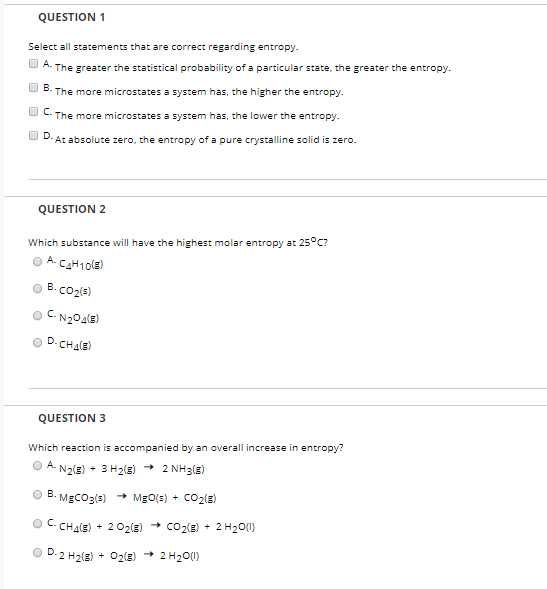

Introduction: Three Different but Equivalent Definitions of Entropy

Open any book which deals with a “theory of time,” “time’s beginning,” and “time’s ending,” and you are likely to find the association of entropy and the Second Law of Thermodynamics with time. The origin of this association of “Time’s Arrow” with entropy can be traced to Clausius’s famous statement of the Second Law: “The entropy of the universe always increases”. The explicit association of entropy with time is due to Eddington, as we shall discuss in the next section.

The statement “entropy always increases” implicitly means that “entropy always increases with time”. In this article, we show that statements like “entropy always increases” are meaningless; entropy in itself does not have a numerical value. Therefore, one cannot claim that it increases or decreases. Entropy changes are meaningful only for well-defined thermodynamic processes in systems for which the entropy is defined.

In this Section we briefly present three different, but equivalent, definitions of entropy. By “different” we mean that the definitions do not follow from each other; specifically, neither Boltzmann’s definition nor the one based on Shannon’s measure of information (SMI) can be “derived” from Clausius’s definition. By “equivalent” we mean that, for any process for which we can calculate the change in entropy, we obtain the same results by using the three definitions. Note carefully that there are many processes for which we cannot calculate the pertinent entropy changes. Therefore, we cannot claim equivalency in general. However, it is believed that these three definitions are indeed equivalent, although no formal proof of this is available.

At this point, it is appropriate to note that there are many other “definitions” of entropy which are not equivalent to any of the three definitions discussed in this article. I will not discuss these here, since they were discussed at great length in several previous publications.

In the following sections we shall introduce three definitions of entropy. The first definition originated in the 19th century, stemming from the interest in heat engines. The introduction of entropy into the vocabulary of physics is attributed to Clausius. In reality, Clausius did not define entropy, but rather only changes in entropy. Clausius’s definition, together with the Third Law of Thermodynamics, led to the calculation of “absolute values” of the entropy of many substances.

The second definition is attributed to Boltzmann. This definition is sometimes referred to as the microscopic definition of entropy. It relates the entropy of a system to the total number of accessible micro-states of a thermodynamic system characterized macroscopically by the total energy E, volume V, and total number of particles N. The extension of Boltzmann’s definition to systems characterized by independent variables other than (E, V, N) is attributed to Gibbs. The Boltzmann definition seems to be completely unrelated to the Clausius definition. However, it is found that, for all processes for which entropy changes can be calculated by using Boltzmann’s definition, the results agree with entropy changes calculated using Clausius’s definition.

The third definition is based on the SMI, $-\sum_i p_i \log p_i$. To understand the meaning of this expression, first define an information function $I$ in terms of an event $i$ with probability $p_i$. The amount of information acquired due to the observation of event $i$ follows from Shannon's solution of the fundamental properties of information:

1. $I(p)$ is monotonically decreasing in $p$: an increase in the probability of an event decreases the information from an observed event, and vice versa.
2. $I(1) = 0$: events that always occur do not communicate information.
3. $I(p_1 \cdot p_2) = I(p_1) + I(p_2)$: the information learned from independent events is the sum of the information learned from each event.
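The three properties of the information function can be checked numerically. The sketch below assumes the standard choice $I(p) = \log_2(1/p)$, measured in bits; the text does not fix the function or the base of the logarithm, so both are assumptions, and the name `information` is illustrative only.

```python
import math


def information(p: float) -> float:
    """Information (in bits) from observing an event of probability p.

    Assumes I(p) = log2(1/p); the base-2 logarithm is an illustrative choice.
    """
    if not 0.0 < p <= 1.0:
        raise ValueError("p must lie in the interval (0, 1]")
    return math.log2(1.0 / p)


# Property 1: I(p) decreases as p increases.
rare, common = information(0.25), information(0.5)

# Property 2: an event that always occurs carries no information.
certain = information(1.0)

# Property 3: information from independent events adds up.
joint = information(0.5 * 0.25)
separate = information(0.5) + information(0.25)
```

Here `rare` exceeds `common`, `certain` is zero, and `joint` matches `separate` up to floating-point rounding.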

For a discrete random variable $X$ taking values $x \in \mathcal{X}$ with probability $p(x)$, the entropy is

$$H(X) := -\sum_{x \in \mathcal{X}} p(x) \log p(x) = \mathbb{E}[-\log p(X)]$$

Uniform probability yields maximum uncertainty and therefore maximum entropy. Entropy, then, can only decrease from the value associated with uniform probability. The extreme case is that of a double-headed coin that never comes up tails, or a double-tailed coin that never results in a head. The entropy is then zero: each toss of the coin delivers no new information, as the outcome of each coin toss is always certain.

Entropy can be normalized by dividing it by information length. This ratio is called metric entropy and is a measure of the randomness of the information.
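As a rough illustration of the entropy formula $H(X) = -\sum_x p(x) \log p(x)$ and the coin examples, the following sketch computes the entropy of a finite distribution. The function name `shannon_entropy`, the base-2 default, and the sample probabilities are assumptions made for this example, not part of the original text.

```python
import math
from typing import Sequence


def shannon_entropy(probs: Sequence[float], base: float = 2.0) -> float:
    """Entropy H = -sum(p * log(p)) of a finite probability distribution."""
    if any(p < 0 for p in probs) or not math.isclose(sum(probs), 1.0):
        raise ValueError("probabilities must be non-negative and sum to 1")
    # Outcomes with p == 0 are skipped: p * log(p) tends to 0 as p -> 0.
    return sum(p * math.log(1.0 / p, base) for p in probs if p > 0)


fair = shannon_entropy([0.5, 0.5])           # uniform: maximum uncertainty
biased = shannon_entropy([0.9, 0.1])         # less uncertain than a fair coin
double_headed = shannon_entropy([1.0, 0.0])  # outcome is always certain
```

With these inputs the fair coin gives the largest value (one bit), the biased coin gives a smaller positive value, and the double-headed coin gives zero.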
